Digital Frictions

Examining the points of abrasion in the so-called smart city: where code meets concrete and where algorithms encounter forms of intelligence that aren't artificial.

A new assemblage of digital tools promises to reduce friction in the management of urban real estate — to help prospective renters virtually experience potential units without the hassle of trekking all over the city; to help landlords ensure that those potential renters will be good neighbors and responsible tenants; and to enable those new renters, their arms encumbered with moving boxes, to glide right through their apartment buildings’ secure entries. Property technology, or “proptech,” promises easy passage through, and close monitoring of, buildings networked via sensors and cameras to biometric databases and screening platforms. Yet as Erin McElroy demonstrates, proptech’s promises of security and convenience tend not to apply to poor and working-class tenants of color, who are instead finding themselves targeted by what are fast becoming new instruments of surveillance and harassment in housing complexes across New York City. – SM

In 2018, many of the rent-stabilized tenants at the two-building, 718-unit Atlantic Plaza Towers received notice from their landlord, Nelson Management, that their wireless key-fob entrance system would be replaced with biometric facial recognition technology. Even though they had been required to submit photos of themselves in order to obtain fobs in the first place — and despite the presence of other surveillance systems throughout the complex, including multiple CCTV cameras — tenants were informed that this new system would ensure their safety by keeping keys out of the hands of “the wrong people.” Marketed as the True Frictionless™ Solution, this new facial recognition system was developed by the Kansas-based company StoneLock, which serves up to 40 percent of Fortune 100 companies, along with several government entities. This is the first publicly known instance of StoneLock endeavoring to deploy its facial recognition product in a New York City housing complex, though biometric technology, developed by other companies, has already been installed in residences throughout the city, such as the Knickerbocker Village affordable housing complex on the Lower East Side. Not coincidentally, StoneLock’s first foray into residential facial recognition will be put to use in surveilling predominantly Black women tenants, many of whom have questioned Nelson Management’s decision to test the company’s product in Brownsville, Brooklyn, as opposed to one of the landlord’s other properties in a more affluent area of the city.

Like dozens of other surveillance systems being rolled out in multifamily residences, commercial buildings, and industrial complexes globally, StoneLock’s True Frictionless™ Solution is part of a burgeoning class of property technology, or proptech for short (also called real estate technology, or realtech). The last several years have seen a proliferation of proptech companies and platforms reshaping multiple domains of urban life, including the provision, consumption, and management of residential space. Often, proptech entails some configuration of artificial intelligence (AI), the Internet of Things (IoT), machine learning, user dashboards, software, data harvesting, and hardware. It can be difficult to categorize its multiple genres, particularly as many are combined, but proptech can be roughly taxonomized as rental housing management (tenant screening, payment, and maintenance), smart home development, keyless entry surveillance systems, sharing economy platforms, virtual reality-based home sales and rentals, tenant matching, and property database platforms. While aligned with “smart city” rhetoric, proptech makes explicit that private property relations are at the heart of its technological innovations, with companies in this sector catering to landlords (both private and public) who seek to automate the management of their portfolios.

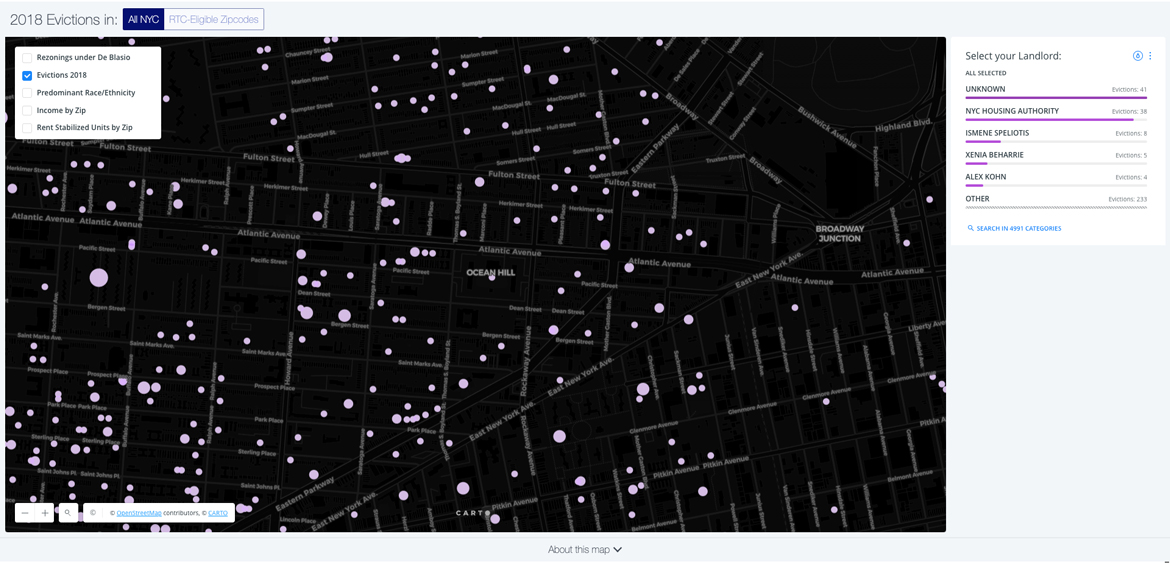

My interest in these emerging technologies, and their often-negative impacts, comes out of longstanding tenant organizing efforts that I have been involved with in the San Francisco Bay Area and in Romania. It is also inspired by research undertaken by a digital cartography collective, the Anti-Eviction Mapping Project (AEMP), which I cofounded in 2013 in San Francisco (and which now maintains chapters in New York City and Los Angeles as well). Across multiple cities, I have witnessed and analyzed how real estate and technology platforms often work in conjunction to displace and target poor and working-class tenants of color. Proptech extends this tendency, while also signaling the merger of two leading global industries, Big Tech and Real Estate, that hinge upon the accumulation of property — data and land, respectively. Proptech collapses these two property regimes, leading to the heightened dispossession of people long targeted by both.

At a recent proptech conference I attended near Wall Street, aficionados of the technology used the word “friction” a dozen times, always likening it to a slowness or hindrance to be overcome through technical means. “Frictionlessness,” on the other hand, implies ease, cost cutting, and the ability to capitalize upon the consumer desires of young, affluent people — for instance, smart buildings, fast internet, and integration with delivery services. As Robert Nelson (the owner of Nelson Management and a self-described “tech geek”) proclaimed to his tenants by way of a flyer: “Your daily access experience will be frictionless, meaning you touch nothing and show only your face. From now on the doorway will just recognize you!” And as StoneLock advertises, their products (including, of course, the True Frictionless™ Solution) will provide users with “frictionless access,” so that they “just GO!” A key corollary to “frictionlessness” in proptech parlance is “safety.” Ari Teman, a former comedian and engineer who created the “virtual doorman” system GateGuard, which utilizes facial recognition, has defended proptech by advocating that “surveillance can make you feel safe,” and suggested that his product enables tenants to keep illegal subletters and unwelcome people from entering their building.

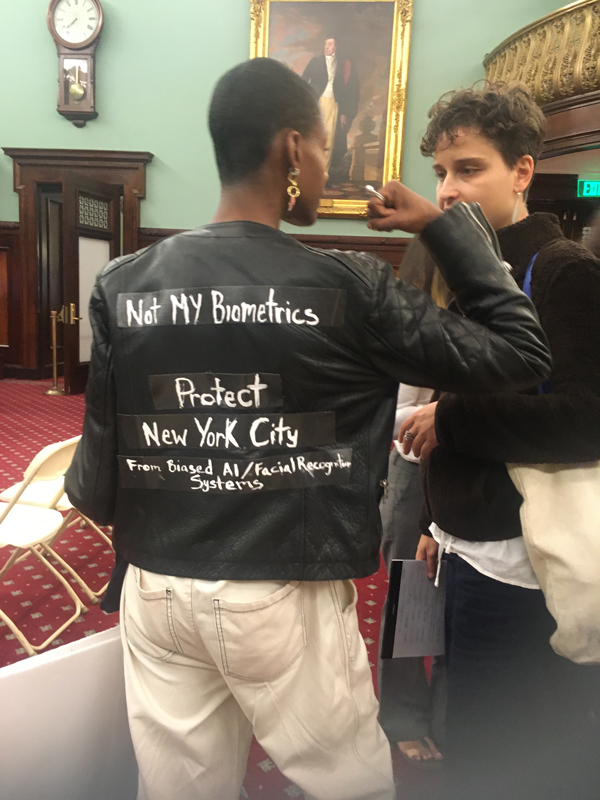

Yet proptech’s “frictionless” and “safe” qualities come at the cost of accountability to its users and the public. Beyond causing discomfort and concerns about privacy, proptech presents novel threats to the safety and stability of vulnerable tenants. GateGuard has been installed in roughly 1,000 residential buildings throughout New York City, although Teman (like other proptech developers) has refused to publicize these locations. Likewise, during a City Council hearing I attended in October 2019, city agencies (including the Department of Information Technology, Department of Buildings, and Department of Consumer and Worker Protection) claimed to have neither any knowledge of where residential facial recognition is installed, nor the capacity to map it. And though the purpose of this hearing was to discuss requirements for businesses and residences to disclose their use of “biometric identifier technology,” and to provide physical keys to tenants if requested, it has become clear that while proptech maintains an opaque public profile, most people monitored by these technologies don’t get the option of consenting to being a test subject.

In 2014, entrances throughout the twelve-building Knickerbocker Village complex — regulated by New York State Homes and Community Renewal (HCR), the state’s affordable housing agency — were outfitted with FST21 SafeRise, a facial recognition product created by former Israel Defense Forces Major General Aharon Farkash. During the City Council’s hearing, one Knickerbocker Village tenant named Christina Zhang recounted stories of how this system has been implemented in her building. For one, the technology often simply fails to work properly, forcing tenants to line up and dance in front of the cameras, hoping that their movements will inspire recognition. But of greater concern, the complex’s tenants — 70 percent of whom are Asian, and many of whom are immigrants — have no idea what the data being collected from them is used for, and have expressed fears of their biometric information ending up in the hands of the NYPD or ICE, both of which are known to use facial recognition and surveillance technologies to identify suspects and track undocumented people.

Several tenants from Atlantic Plaza Towers testified at this hearing as well, voicing unease about proptech’s harvesting of biometric data. Yet efforts to organize against the use of facial recognition had been brewing in the Brownsville complex for over a year. In 2018, when Nelson Management announced the installation of the True Frictionless™ Solution via paper mailings, many tenants were left in the dark. A prior stipulation had required residents be photographed in order to receive mailbox keys, and some had refused this request. In response, five tenants, all Black women, convened in the lobby of one of the buildings on an October morning to inform their neighbors in person about StoneLock’s system. Soon after, these women received notice from Nelson that they had been recorded by the lobby’s half-dozen, 360-degree cameras, and were incorrectly informed that their “loitering” was illegal and would have to stop. A follow-up announcement of the impending facial recognition system was then made public, listing the name of every tenant and their apartment number in the building. One tenant named Anita, who had been flyering in her building, decried this incident during the City Council hearing: “Privacy be damned!”

Resident Fabian Rogers, who has been on the frontlines of the campaign against facial recognition, also described his experience living with the technology: “I had many concerns as a tenant, and security was not one of them. It was the landlord’s concern, and it was imposed on me. I already feel well enough surveilled with all the cameras and key fobs that exist. I kind of feel like a criminal even though I pay my rent on time.” Beyond taking issue with the security theater of facial recognition, tenants expressed little faith in Nelson’s claim that their biometric data would remain secure, and speculated that, if facing threat of eviction, this information could be potentially used against them in housing court. For these reasons — and because StoneLock’s system will give the company access, without tenants’ consent, to nearly 5,000 new faces with which to test its algorithms — Atlantic Plaza Towers tenants have been advocating for a ban on facial recognition in the city altogether.

These tenants realize the technological changes being imposed upon them are not for their benefit, but for the “frictionless” experiences and “safety” of future gentrifiers yet to arrive. Atlantic Plaza Towers was built for middle-income families as part of the state-run Mitchell-Lama program in the 1950s, and it remains relatively affordable today, having just gained rent stabilization status two years ago. Yet with the ongoing gentrification of Brownsville and increased number of evictions, affordable housing and services are increasingly hard to come by. As Anita observed, “Tenants have so many issues that need to be addressed, but now we’re dealing with this . . . So poor people like me can’t live here anymore. I’m pissed at what’s going on. So many people in the neighborhood are being pushed out . . . Please consider this a tragedy waiting to happen.”

While the struggle at Atlantic Plaza Towers has drawn public attention to how proptech can amplify tenant insecurity, the database systems that proptech hardware feeds into and supplies with new information, biometric and otherwise, remain obscure. For instance, Ari Teman’s GateGuard has the ability to integrate with one of his company’s other products, PropertyPanel.xyz, a proprietary database and dashboard platform for New York City landlords to use in acquisitions and property management. PropertyPanel.xyz allows purchasers to gather an array of information about buildings, and to “target” properties based upon value, debt, rent stabilization, ownership, air rights, size, and other criteria. Purchasers can also obtain alerts to violations and complaints, communicate with building staff, screen vendors, and are given the option to integrate PropertyPanel.xyz with yet another Teman product, SubletSpy, which monitors Airbnb tenants for potential infractions. Upon purchase of GateGuard, landlords and property managers consent to Teman accessing “any property of yours, digital or real world, in any method, for any purpose,” including for the purposes of plugging data into PropertyPanel.xyz. No other clarifying information is given as to how this data may be used, or how it might facilitate the training of biometric algorithms.

Before inventing this suite of interconnected proptech products, Teman first created the startup Friend or Fraud Inc., which developed software to verify internet users’ identities through video-analyzing machine vision, replete with breath and heartrate monitors. Today, he employs a team of workers across the US, Israel, and Eastern Europe who assist 100 landlords and property management companies in New York City, Miami, Chicago, and Los Angeles. Teman first invented SubletSpy in 2014 following an Airbnb experience in which, after renting his New York apartment on the platform, he returned to find the remnants of a well-attended sex party. Teman filed a complaint to Airbnb, but rather than resolving the matter, this action landed him on a “bad tenant” database, making it nearly impossible to find a new apartment in the city.

These inscrutable databases are often compiled by third-party “data brokers,” who supply a vast number of individuals’ personal information to landlords, marketers, and government agencies. In some instances, these brokers operate public platforms such as MyLife.com™, which gathers information from Facebook, LinkedIn, Twitter, Gmail, Yahoo, AOL, Outlook, school yearbooks, Ancestry.com and more to assign “reputation scores” to a claimed 325 million “verified identities.” Other prominent data brokers such as Oracle, Experian, and Equifax buy and sell personal information related to a renter’s credit history. Recently, it was revealed that Experian offered to raise users’ FICO scores in exchange for credit card passwords, allowing the company to scan a user’s purchase history into their databases and sell this information to third parties. Not only is credit reporting often discriminatory (particularly with regard to mortgage lending and rental payment history — an issue amplified during the 2008 subprime crisis), but like information brokerage at large, it alienates individuals and reduces them to disaggregated data points. There have also been numerous instances of personal and biometric data being sold (and occasionally hacked) by third parties without the consent or even the knowledge of the individual supplying the data.

Proptech companies such as Avail and Cozy (both marketed to small-scale, individual landlords) have entered into this space as well, developing digital products and platforms for the express purpose of screening tenants. CoreLogic, headquartered in California, has developed one of the most comprehensive residential database and tenant screening systems, with records spanning 50 years, 145 million parcels, and 99.9 percent of US property records. Their access to arrest records spans over 70 percent of the US’s population centers, and interfaces with law enforcement agencies throughout the country. Updated every 15 minutes, this system includes over 80 million booking and incarceration records from roughly 2,000 facilities. CoreLogic also sources and returns data from the FBI and other federal agencies, promising to help landlords identify “terrorists.” Furthermore, the company’s Registry CrimSAFE product bundle advertises its ability to seamlessly implement landlord policies, and “optimize” Fair Housing compliance. Yet, since releasing CrimSAFE, CoreLogic has been faced with a lawsuit over its algorithm which, according to the Connecticut Fair Housing Center, “disproportionately disqualifies African Americans and Latinos.” Meanwhile, much of the data being mined by CoreLogic, especially that maintained by law enforcement, is plagued with inaccuracies and racial biases.

While it is unclear exactly which database system Teman appeared on, it is clear that, as a wealthy white man, he is not the type of person generally profiled by proptech database bundles. But in response to his own blacklisting, rather than getting involved in racial or data justice work, Teman chose instead to invent SubletSpy. Thus, Ari Teman, himself once a victim of proptech platforms and databases, weaponized tenant profiling technology for his own personal gain.

While they may not have Teman’s resources, housing and technology justice advocates and their allies have been pushing back against proptech’s embedded biases and racist effects. In May 2019, Brooklyn Legal Services (BLS), a legal non-profit representing Atlantic Plaza tenants in their struggle against surveillance, composed a letter to HCR noting that facial recognition indicates a dramatic shift in Nelson’s prior practices of landlordism. One BLS lawyer, Samar Katnani, has further argued that “the ability to enter your home should not be conditioned on the surrender of your biometric data, particularly when the landlord’s collection, storage, and use of such data is untested and unregulated. . . We are in uncharted waters with the use of facial recognition technology in residential spaces.”

Meanwhile, scholars at New York University’s AI Now Institute (an interdisciplinary research center dedicated to studying the social implications of advanced technical systems, of which I am a part) also wrote an expert letter in support of the tenants, describing how facial recognition systems for residential entry are bound to fail in accurately identifying tenants of color, women, and gender minorities. Numerous studies have shown that machine learning algorithms, disproportionately built and trained by white men, discriminate on the basis of gender and race, with women of color misclassified with error rates of nearly 40 percent, compared to one to five percent for white men. AI systems, particularly for facial recognition tools, rely upon machine learning algorithms trained with data. Physiognomic labels related to hair, skin, facial structure, and more are codified into racial and gender classifications, echoing 19th-century, pseudoscientific ideas about race and eugenics. And while facial recognition algorithms have been shown to be largely inaccurate in identifying women and Black people, it is still Black people being targeted most by them, and stopped and subjected to searches in facial recognition databases by police, often resulting in false positive identifications. This has led cities such as San Francisco, Oakland, and Somerville to recently ban the use of facial recognition by government agencies altogether.

The pressure applied by Atlantic Plaza Towers tenants has helped pave the way for Brooklyn-based Congresswoman Yvette Clarke to introduce a bill in the US House of Representatives named the “No Biometric Barriers to Housing Act” that would prohibit facial, voice, fingerprint, and DNA identification technologies in public housing. The bill would also require the US Department of Housing and Urban Development (HUD) to report on biometric systems used in federally-assisted public housing in the last five years. Despite these potential legislative gains for public housing tenants, HUD has recently proposed alarming alterations to the 1968 Fair Housing Act (FHA) — an offspring of the civil rights era outlawing housing discrimination against people of color. The Fair Housing Act requires local governments that receive HUD funding to address segregation, disinvestment, and displacement in their communities. But as investigative reporters Aaron Glantz and Emmanuel Martinez write, under new proposed regulations, a company accused of discrimination in housing “would be able to ‘defeat’ that claim if an algorithm is involved.” In this way, the “gentrifier-in-chief,” president of the “real estate state,” has made himself the new vanguard of racist proptech algorithms.

Following the October 2019 City Council hearing on facial recognition, New York City agencies may implement changes to mitigate facial recognition’s impacts. But as the tenants of Atlantic Plaza Towers eloquently made clear, their demand is not for band-aid mitigations, but for a ban on facial recognition in New York City. The presence of already-existing security measures made tenants in Atlantic Plaza Towers feel policed in their homes long before their landlord introduced the possibility of facial recognition. Against the backdrop of gentrification, insinuations of criminality and evictability are often used to maintain what Brenna Bhandar (citing legal scholar Cheryl Harris) calls the “whiteness of property.” According to Bhandar, the ownership of property in places marked by the histories of colonialism and the slave trade is rooted in a “modern racial regime” dependent upon the dispossession of real property and data. Proptech has the potential to accelerate both forms of dispossession through the non-consensual mining of, and capitalizing upon, people’s intimate data — what can be described as “data colonialism” — which updates processes of settler colonialism and occupation for the digital age. Yet it also sits upon a thick palimpsest of older property schemas and the information systems supporting these regimes. One could trace proptech’s lineage back to the earliest moments of settler colonialism and the technologies it employed to gather data, map land, and dispossess Indigenous populations, or more recently to the redlining of communities of color during the mid-20th century. Given the potential for racist abuses in the future, and the inability of the city, landlords, and proptech companies to make transparent where and how new, “frictionless” tools function, a ban is the only just future — friction-filled as it may be.

This research has received support from the British Academy's Tackling the UK's International Challenges program. The author would like to thank the brilliant tenants of Atlantic Plaza Towers for their organizing work and analysis, and the AI Now Institute and Rashida Richardson for feedback and support in writing this article.

The views expressed here are those of the authors only and do not reflect the position of The Architectural League of New York.
