Digital Frictions

Examining the points of abrasion in the so-called smart city: where code meets concrete and where algorithms encounter forms of intelligence that aren't artificial.


Humans have long had rudimentary tools — from fire to locks to lightbulbs — that regulate architecture’s ability to provide security, climate control, and illumination. Yet the logic of “smartness” dictates that we digitize and network everything that can be digitized and networked — even those analog tools that work quite well in their “dumb” simplicity. Here, Dan Taeyoung examines how the integration of smart technologies into cooperative working environments doesn’t simply provide convenience and efficiency for individual members. Instead, it radically transforms an entire ecology, introducing new social and technical frictions, and perhaps even diminishing the collective intelligence of architecture’s inhabitants. – SM

Altering a space means navigating the organizational structure of its inhabitants. For example, the furniture layout of a large office may only be alterable by a small number of workers in a management hierarchy. In a shared apartment, moving a piece of furniture often requires social agreement from everyone else who lives there. Spaces are social ecosystems that shape us, and are shaped by us.

“Smart” building technologies are here, entering into and altering these ecosystems. They promise convenience, security, efficiency, and automation: doorbells that record movement, thermostats that already know your desired temperature, and control “at the tap of a phone” or at your verbal command. How exactly do networked objects alter spatial and social ecologies?

What follows are examinations of how a smart doorbell and thermostat have been integrated into two collective community spaces I was deeply involved in creating and cultivating — Soft Surplus and Prime Produce. I care greatly about these spaces, where members hold events and workshops, practice personal and professional work (and play) — and in the process, collectively organize and share decision-making power and agency. We reduce hierarchies through transparency about power and finances, and attempt to be thoughtful and just in creating “community infrastructure.” I consider these spaces to be almost like community gardens — where people tend to the environment and each other, and evolve together.

We have learned unexpected things in the process of experimenting with smart building technologies. Personally, I have learned that they are best understood as mediators of behavior and relationships, intersecting the social and material ecologies of the spaces they “inhabit.”

Soft Surplus was established to create a space for “learning things from each other by making things near each other.”[1] In a former automobile warehouse in Brooklyn, two dozen members or more — including architects, artists, dancers, biologists, teachers, professors, activists, aerialists, designers, and technologists — create projects, organize events, clean and fix the space together. Members also hold collective decision-making sessions called “gardening meetings” to decide on new members, discuss our budget, plan events, and of course, change our own decision-making process. Imagine that the space is like a communal kitchen: active and often messy.

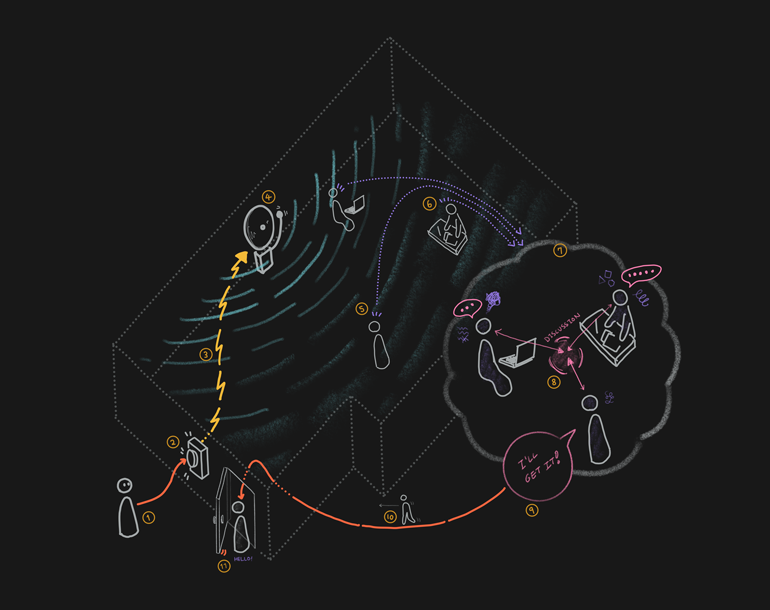

We regularly receive friends, deliveries, guests, and other visitors. When the doorbell rings, a chime sounds through the space, and someone needs to get up from their desk to open the door. Soft Surplus has no enclosed rooms, which means that it’s relatively easy to see or hear activity near the front door. Desks are located at different distances: It may take five seconds for one member to reach the door, or 20 seconds for another. As a result, when the doorbell rings, a short, rapid discussion occurs to decide who will interrupt their work to open the door.

A person might shout, “I’ll get it,” claiming responsibility for opening the door and alerting others so that nobody else has to get up. If someone has a guest or delivery that they’ve been expecting, they might say, “It’s for me!” and head to the door. When a person is in a particularly deep state of creative flow, they might delay their movement as an implicit request for others to get the door. And if someone has been opening the door frequently, they might get up more slowly, or verbally ask, “I’m sorry, but could someone else get the door?” as a request for others to take their turn.

Like many social interactions, this process is brief but complex. It involves listening, tacitly coordinating actions, and acknowledging others’ needs. Occasionally, the doorbell will ring, and nobody will move or say anything. I interpret this to mean that everyone is deep in their work, and silently signaling: “I’m sorry but I’m busy right now, could someone else get the door?” Or, when someone says, “I’ll get it this time,” immediately after a doorbell rings, they might be acknowledging the labor of friends who are close to the door and open it more often.

As a weekend project, I connected Soft Surplus’ doorbell to our private chat and messaging system, Slack, using a Wi-Fi-enabled microprocessor. This chat-enabled doorbell was a technologically simple rendition of a typical smart doorbell. When someone rang the doorbell, a chime would sound as usual, but it would also connect to the internet to send a notification to the chat system: “Someone has pressed the doorbell!” My intention for this technology was to reduce the potentially disruptive sound of the doorbell chime, and to redistribute the burden of opening the door.
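To make the mechanism concrete, here is a minimal Python sketch of the notification step. It is an illustration only, not the code that ran on the microprocessor at Soft Surplus. It assumes Slack's incoming-webhook feature, which accepts a JSON body with a `text` field; the webhook URL is a placeholder, and the function names `build_doorbell_request` and `notify_chat` are hypothetical.

```python
import json
import urllib.request

# Placeholder only: a real incoming-webhook URL is issued by Slack per workspace.
WEBHOOK_URL = "https://hooks.slack.com/services/EXAMPLE/EXAMPLE/EXAMPLE"


def build_doorbell_request(webhook_url: str) -> urllib.request.Request:
    """Build (but do not send) the HTTP POST that announces a doorbell press."""
    payload = {"text": "Someone has pressed the doorbell!"}
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def notify_chat(webhook_url: str = WEBHOOK_URL) -> int:
    """Send the notification and return the HTTP status code."""
    request = build_doorbell_request(webhook_url)
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status
```

Nothing in this request records who is in the room or when the message will be read. It is delivered to every subscriber of the channel, whenever each of them next looks.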

After a short amount of testing, I realized that the networked doorbell was not smart, but quite the opposite. It was limiting — acting against the social “intelligence” of our space. Chat messages are received asynchronously. When you open the chat application and read a message that the doorbell has been rung, this could mean that someone has just pressed the doorbell — or that someone pressed the doorbell an hour ago, and you are only seeing it now. A negotiation around who opens the door becomes confusing because it is unclear whether or not someone else has already responded to the doorbell. To make matters worse, the chat notification is sent to every member, even if they are outside the space, cluttering everyone’s phone with notifications and reducing their perceived importance.

Let’s consider the technology of the “stupid” door chime: When the button at the door is pressed, it sends a signal to a speaker inside. The speaker vibrates rapidly, creating pressure waves in the air that propagate throughout the space. When these waves reach our ears, we perceive them as sound. This sound is also absorbed and scattered by the walls of the space, so that the door chime is not heard beyond the space’s physical boundaries. If you hear the door chime, it means that you are physically present. In addition, the door chime is synchronous; members are notified at the same time, creating a context for quick verbal discussion. How smart!

Eventually, I removed the microprocessor to return our doorbell to its previous stupid state. Our chat-enabled doorbell was not a technological failure, but a system failure. It did not work with our social process, and the simple doorbell was more functional within our existing system, already mediated and formed by many different actors — objects, technologies, spaces, humans, interpersonal relationships, social norms. When a networked technology enters the fray, it engages with these different actors, modifying relationships and creating new ones, and ultimately changing the system. If a different smart doorbell benefits Soft Surplus, it will not do so because it is technologically advanced, but because its technologies will enmesh with the social system in ways that are better for everyone.

Prime Produce is a “guild for social good”: a nonprofit membership cooperative full of nonprofits, social entrepreneurs, educators, and community organizers, as well as friends and neighbors. Members share the workspace, located in a two-and-a-half-story building in Hell’s Kitchen, Manhattan.[2] We also hold regular meetings to govern, manage, and organize all aspects of the community, cook meals for each other, host guests, help produce events, and spend time with one another. Prime Produce is an intentional community, not a coworking space or incubator, because the decision-making power structures and the financial structures are not oriented towards profit accumulation, growth, or scale.

Thermostats are inherently social devices. Since temperature affects everyone in a space, changing the temperature usually involves some amount of social negotiation. If you are in a small shared apartment, you might casually ask others if it’s okay to open a window or turn on the air conditioner. If you are in a large space with many other people, changing the temperature of the space might involve some institutional process — holding meetings or speaking to an appropriate person.

Some institutions make organizational control over temperature explicit. They lock the thermostat into a plastic guard and have a facilities manager or administrator hold the keys. The moment the thermostat is placed in its guard and locked in with a key, controlling the temperature becomes a question of permissions and organizational access. The key mediates access, creating a division between those who have and have not been authorized to access the thermostat.[3]

Much like the locking thermostat guard, the smart thermostat restricts who has agency to shape the spaces they inhabit, and how this process unfolds. When a software system mediates the temperature control of a building, it also structures a social system according to its particular logic of authorization and access.

At Prime Produce, the temperature control system consists of two entangled feedback loops. The thermostat measures the ambient temperature. It turns the A/C on when it is too warm, and turns the A/C off when it is too cool, steering the ambient temperature towards equilibrium. Members also regulate their individual, desired temperatures through a social feedback loop. If it’s too cold or hot, someone might initiate a discussion out loud to adjust the temperature. When a person approaches the thermostat to change its settings, this is also a publicly visible action that generates negotiation and discussion. “I see you’re setting the space to be cooler — but I’m actually very cold right now.”
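The machine half of these two loops can be sketched in a few lines of Python. This is a generic on/off controller, not the firmware of any particular thermostat. The function name `ac_should_run` and the one-degree tolerance `band_f` are assumptions added so that the sketch does not flip the A/C on and off at the exact setpoint.

```python
def ac_should_run(ambient_f: float, setpoint_f: float, running: bool,
                  band_f: float = 1.0) -> bool:
    """Decide whether the A/C should be on for the next interval."""
    if ambient_f > setpoint_f + band_f:
        return True   # too warm: switch cooling on
    if ambient_f < setpoint_f - band_f:
        return False  # too cool: switch cooling off
    return running    # within the tolerance band: leave the A/C as it is
```

The only input that carries anyone's preference is `setpoint_f`. The social loop described above is the process by which that single number gets chosen, and it has no representation in the code.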

What does a technological interface for changing the temperature look like in a non-hierarchical space? There are currently no explicit rules as to who can or cannot control the thermostat at Prime Produce. However, in practice this sometimes means that only a few members feel empowered or enabled to change the thermostat. I wanted to experiment with changing this social dynamic through a collective voting system — to have the temperature control of a collective environment itself be explicitly democratic.

With this in mind, during a renovation, I coordinated the installation of networked thermostats and light switches. These thermostats and switches look the same as non-networked devices, but they create a mesh network that communicates with a base station. This infrastructure enables the lighting and temperature systems of the building to be controlled both through physical interfaces and remotely over the internet.

I then developed a temperature voting bot for our chat system. The bot would automatically post a poll to the chat, asking if anyone wanted to raise or lower the temperature. It would tally each vote as representing a 0.5°F temperature change, and adjust the thermostat accordingly. For example, if three people wanted it to be cooler, and one person wanted it to be warmer, the net result would be two votes for cooler, and the bot would adjust the temperature downwards by one degree.
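The tally arithmetic is small enough to write out. The following Python sketch reproduces the rule as described, with each vote worth half a degree Fahrenheit. The names `DEGREES_PER_VOTE_F` and `tally_adjustment` are hypothetical, and the original bot's code is not shown here.

```python
DEGREES_PER_VOTE_F = 0.5  # each vote is worth half a degree Fahrenheit


def tally_adjustment(warmer_votes: int, cooler_votes: int) -> float:
    """Return the net setpoint change in degrees F; negative means cooler."""
    return (warmer_votes - cooler_votes) * DEGREES_PER_VOTE_F


# Three votes for cooler and one for warmer: (1 - 3) * 0.5
print(tally_adjustment(warmer_votes=1, cooler_votes=3))  # -1.0
```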

Technologically speaking, the temperature voting bot worked great, and it was fun. However, this system created social frictions too. Only some members seemed to engage with the voting process. This was troublesome, because it meant that unlike the physical thermostat, which is visible to everyone, this bot might actually make temperature changes opaque to those not following the process. In addition, we couldn’t find the right frequency for the voting bot to create polls. When the polls were infrequent, people would simply use the physical thermostat to control the temperature; when polls were too frequent, it was easy to ignore the bot’s messages as spam.

The software system I developed contained an operating logic that was difficult to change cooperatively.[4] Does one vote for raising the temperature really cancel out one vote for lowering the temperature? Should each person’s vote symbolize one degree, or should the collected results of the poll shift the temperature up and down by a single degree instead? There were so many different ways to structure the voting, yet I alone had chosen and coded a structure into the software system that was effectively alterable only by someone with the ability to code. While this voting bot might have worked for our purposes, I disliked the idea of a non-cooperatively controlled decision-making structure, and I disabled the voting bot.
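The alternatives raised by these questions each amount to a different function. As a hedged illustration in Python, with all function names hypothetical, here are three tallying rules that give different answers to the same poll:

```python
from typing import Callable, Dict


def half_degree_per_vote(warmer: int, cooler: int) -> float:
    """Opposing votes cancel; each net vote moves the setpoint 0.5 degrees F."""
    return (warmer - cooler) * 0.5


def full_degree_per_vote(warmer: int, cooler: int) -> float:
    """Opposing votes cancel; each net vote moves the setpoint 1 degree F."""
    return float(warmer - cooler)


def single_degree_by_majority(warmer: int, cooler: int) -> float:
    """The poll as a whole moves the setpoint by at most 1 degree F."""
    if warmer > cooler:
        return 1.0
    if cooler > warmer:
        return -1.0
    return 0.0


RULES: Dict[str, Callable[[int, int], float]] = {
    "half degree per vote": half_degree_per_vote,
    "full degree per vote": full_degree_per_vote,
    "single degree by majority": single_degree_by_majority,
}

# Compare the rules on the same poll: one vote for warmer, three for cooler.
for name, rule in RULES.items():
    print(f"{name}: {rule(1, 3):+.1f} F")
```

Choosing among these is a governance decision. Written this way, it is a decision that only someone who can edit the file is able to make.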

Occasionally, a member would forget to turn off the A/C or heat, causing it to run all night in an empty space, which was both environmentally and financially harmful. This was another problem we tried to solve with networked temperature control. How could we create a system to turn off the A/C when it wasn’t needed? One possibility was to install motion sensors in various parts of the building, and to create a system of rules, like: “If the motion sensor in the room has not seen movement for 30 minutes, assume that it is not occupied and turn off the A/C there.”
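A rule like this is easy to state in code. The Python sketch below is an assumption about how such a rule might look, not a system that was built. The room names and the functions `room_is_idle` and `rooms_to_switch_off` are hypothetical, and only the 30-minute threshold comes from the rule quoted above.

```python
from datetime import datetime, timedelta
from typing import Dict, List

IDLE_LIMIT = timedelta(minutes=30)  # threshold from the rule quoted above


def room_is_idle(last_motion: datetime, now: datetime,
                 idle_limit: timedelta = IDLE_LIMIT) -> bool:
    """True when no movement has been seen for at least `idle_limit`."""
    return now - last_motion >= idle_limit


def rooms_to_switch_off(last_motion_by_room: Dict[str, datetime],
                        now: datetime) -> List[str]:
    """Return, in alphabetical order, the rooms whose A/C should be turned off."""
    return sorted(
        room for room, seen in last_motion_by_room.items()
        if room_is_idle(seen, now)
    )


# Example with made-up rooms and sensor readings.
now = datetime(2020, 7, 1, 21, 0)
last_seen = {
    "kitchen": now - timedelta(minutes=45),
    "studio": now - timedelta(minutes=5),
}
print(rooms_to_switch_off(last_seen, now))  # ['kitchen']
```

Every judgment in the rule (what counts as empty, how long to wait, which rooms are covered) becomes a constant or a condition that someone has to know how to find and change.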

However, I realized that the operation of this system would again hide the control logic of the building within a software system, and only one or two people who understood software development would be able to easily modify it. What happens when the collective wants to change the logic, but the people capable of changing it are busy? Or what happens in five years, when we’ve forgotten how the system works?

A software approach to this problem involves building an interface — for example, a website that can let a user change the operating rules of the HVAC system. The interface might include a password system, so only members have the ability to control the system. But who designs the logic of the interface itself? The interface may give someone permission to change the temperature. But who decides that the interface should have a specific permission structure? Shouldn’t all members be able to alter and rewrite the HVAC logic that shapes the collective environment?

Eventually, I created a Slack-based chatbot for controlling the temperature. Prime Produce members can ask the bot to set the temperature of any room in the space, which it does with a cheerful confirmation, and anyone can create a regularly recurring message. This chatbot works in the context of a cooperative social system. Someone in the space walks up and alters the thermostat after receiving consent from others in the space. The bot turns off all A/C systems at 7 p.m. People can add new commands relatively easily to change the HVAC schedule, and the interface to do so is not a separate website or login, but simply consists of editing a chat-based reminder within our existing messaging software, which keeps decisions available for debate and discussion. Collective decision-making processes make it possible to change (or discard) this whole system.

This technology is networked. Is it smart? Does it even matter? The complex, rich ecology that we live in consists of entangled relationships between the HVAC system, the software system, the chat system, the social cooperative system, and the physical space. For Soft Surplus and Prime Produce, the “smart” doorbell and thermostat were best understood not as technologies, but as social forces that relate to, enmesh with, and alter existing systems.

Imagine a verdant community garden teeming with plants, birds, pollinating insects, and people. This garden learns, grows, and changes, as the people who tend to it also learn, grow, and change in response to each other and their environment. This community garden is not architected or designed; it is continually altered in situ: an ecology of space, human relationships, and other living and non-living things.

Let’s imagine environments that embody convivial change and co-creation between a space and its inhabitants.[5] We could call these “lush spaces,” where inhabitants shape, build, fix, alter, and create their own surroundings, whether through technology, spatial design, or organizational structure. Lush spaces are more than smart. Physical objects, technologies, and social systems are entangled together to create ecologies of social interaction and shared experience.

Creating collective physical spaces is also, ultimately, a political act. To collectively shape space is to collectively shape our own ways of thinking and doing. Through shared spaces, we can change the way we think to be more collective, more open to change, more socially generous. Or, in other words, if we get used to changing the spaces around us, we can also become more adept at changing political and social realities.

[1] Soft Surplus was started in March 2018 by Melanie Hoff, Austin Smith, and myself, and as of this writing is collectively gardened by: Andres Chang, Angeline Meitzler, Ann Haeyoung, Austin Wade Smith, beck haberstroh, Callil Capuozzo, Dan Taeyoung, Denny George, Édouard Urcades, Elizabeth Hénaff, Fei Liu, Jonathan González, Grace Jooyei Lee, Katie Giritlian, Kevin Cadena, Lai Yi Ohlsen, Lily C. Wong, Lucy Siyao Liu, Melanie Hoff, Nahee Kim, Pamela Liou, Phyllis Ma, Sandra Atakora, Sophie Kovel, Taylor Zanke, and others.

[2] The Prime Produce Apprentice Cooperative was started by Jerone Hsu, Chris Chavez, and myself, and is cooperatively cultivated by Alex Qin, Amelia Foster, Chris Chavez, Dan Taeyoung, David Isaac Hecht, Emlyn Medalla, Home H.C. Nguyen, Jerone Hsu, Joe Rinehart, Larnell Vickers, Leng L. Lim, Marcos Salazar, Michelle Jackson, Patrick Paul Garlinger, Qiana Mickie, Qinza Najm, Rosalind Zavras, Sabrina Suliman, Saks Afridi, Wally Patawaran, Yasmine Fequiere, Yuko Kudo, and Yvonne Chow.

[3] For more ideas on keys as mediators, see Bruno Latour, “The Berlin Key or How to Do Words with Things,” in Matter, Materiality and Modern Culture, edited by P.M. Graves-Brown, 10-21. London: Routledge, 1993.

[4] For much more on software logic as ideology, see Wendy Hui Kyong Chun, “On Software, or the Persistence of Visual Knowledge,” Grey Room 18 (2005): 26-51.

[5] “I choose the term ‘conviviality’ to designate the opposite of industrial productivity. I intend it to mean autonomous and creative intercourse among persons, and the intercourse of persons with their environment; and this in contrast with the conditioned response of persons to the demands made upon them by others, and by a man-made environment. I consider conviviality to be individual freedom realized in personal interdependence and, as such, an intrinsic ethical value.” Ivan Illich, Tools for Conviviality. London: Marion Boyars, 2001.

The views expressed here are those of the authors only and do not reflect the position of The Architectural League of New York.
