Digital Frictions

Examining the points of abrasion in the so-called smart city: where code meets concrete and where algorithms encounter forms of intelligence that aren't artificial.


Navigating without vision through a train station, down a sandy beach, or across a city intersection involves the attunement of other modes of perception, and perhaps the deployment of an array of assistive technologies: a cane, a dog, a smartphone, a textured walkway, a public interactive screen. Digital navigational tools offer non-sighted travelers greater independence and richer spatial data, but they introduce new risks and social frictions — from the threat of data breaches, to the emotional labor involved in remote verbal interpretation. Detailing the “avoidable infrastructural frictions imposed on Blind people by sighted decision-makers” — app and interaction designers, software engineers, architects, and planners among them — Chancey Fleet proposes that, in order to build a more inclusive world, we need to exceed the minimum standards for accessibility, and we can do so only by incorporating more Blind interlocutors into our planning meetings and onto our design teams. – SM

As you scroll down, listen to a series of conversations between the author and Blind colleagues and friends about their experiences with digital interfaces in public spaces.

“You know you’re going out there by yourselves, right?” says the lady in charge of the swan boats. “Yes and no,” I’m tempted to say, but I’ve learned that life is simpler when I resist the urge to trouble the assumptions of sighted strangers — especially the ones with clipboards.

There are Blind people who set sail on open water with nothing but a compass and a keen sense of direction. I am not one of those people. I’m a tourist pedaling an aquatic bicycle-swan around Orlando’s manufactured Lake Eola with a guide dog and a friend.

Blindness is, for me, a journey of linear discovery and granular illumination. The absence of a surveying glance makes space for other ways of knowing. Each tap of my cane returns an echo suggesting the dimensions and textures of a space, and affords an extended sense of touch that reveals whether my path is flat or terraced, marble or mud. Birdsong, low to the ground, implies a hedge; a loose coalition of rolling bags marks the entrance to Penn Station; percussive feet on metal flag the stairs up to the High Line. Fiberglass hulls jostling wooden boards near the muted bustle of a ragged line indicate the dock.

Once we’re settled in the boat, I open an app called Blindsquare and drop a virtual breadcrumb: We’re here, at this dock. Breadcrumbing is a feature common to navigation apps for Blind people (there is Overthere, Soundscape, and many more — each with its own mechanics). Whenever I open the app and point my phone in a specific direction (as though the top holds an arrow) I’ll hear that breadcrumb call out its location in distance and degrees. Like most consumer-focused GPS services, this one is accurate to ten yards on a good day. If I wanted to return to a particular intersection, restaurant, or shop, I’d probably find it in the map data that my GPS apps harvest from crowdsourced databases like Foursquare, though places without names aren’t on my map until I add them myself.

As we steer our little boat away from the dock, I notice the low bumps and rustles of other boats returning; the sun at my left shoulder; music punctuated by a blender making far-off margaritas; and a gradual dwindling of all that sound, as we pedal toward the middle of the lake.

There’s a third person in the boat, sort of — he’s a visual interpreter on a digital platform called Aira. He can peer through my phone’s camera, track my location, and refer to the web, all to build a nuanced interpretation of anything I’d like described. Today he helps me take photos: the trees reflected on water in the distance, a little formation of three other boats passing by, and the lighted, multicolored fountain in the center of the lake. And when I’m close to the dock, bobbing in ten square yards of digital uncertainty, he uses concise, clock-based directions to help me steer back in.

Chancey talks with her friend Cayte, who defines precisely what “digital friction” in the built environment entails for many Blind people.

As a tech educator, it’s rare to spend a day amidst people acculturated to vision — whether they’re sighted or newly Blind — without hearing that the technology we use is amazing, and that we are. Each time, I’m tempted to answer, “yes and no.” All technology is amazing: It inspires joy and frustration, reveals and occludes pieces of our world, bends our habits, reflects our culture, and engenders longing for an iteratively more perfect world.

In the Blind community, you’ll sometimes hear the phrase “good traveler”: it describes someone well versed in the techniques of orientation and mobility, a perceptive and confident problem-solver, a reflexive observer of address patterns, and a person with a strong memory for directions. I think of myself as a chaotic good traveler: game to go anywhere, confident but spacy, unduly excited for street fairs and sculptures, and inconveniently bored by conversations about the relative whereabouts of roads and rails.

In 2003, I bought my first GPS system and exulted in a luxury that sighted readers may underrate: street signs stored outside my brain. The data about our physical environment has become richer every year. Early map data favored motorists but now includes lots of pedestrian paths and transit information, which suits my non-driving life. Stores and restaurants increasingly list their products and menus online. Although these listings are meant for an audience at a distance, they also create a neat accessible package of information that most visitors discover visually. For the subjective color of a peek through a window (the ambiance, the merchandise, how people dress), Yelp reviews are indispensable.

To avail myself of these everyday discoveries, I need to be confident and quick at operating a smartphone nonvisually. iOS and Android both have built-in, full-featured screen readers that work efficiently and selectively. Once a screen reader is activated, we use gestures or keyboard commands to move from place to place on screen, understanding where we are via text-to-speech or a Braille display. Blind people use a mix of niche and mainstream apps. Microsoft’s Soundscape, designed with Blind travel in mind, calls out the names of approaching intersections and landmarks, voiced from the points of a virtual clock face or compass. If you wear bone conduction headphones (which don’t cover your ears to occlude the sound around you, but instead let information from your phone blend with the ambient sounds of wherever you are), the concise, directional cues from Soundscape amount to virtual signs that you can notice without ever taking your phone out of your pocket.

Learning how to use a smartphone — from the fundamentals of reading and interacting with elements in the interface, to the particulars of deftly applying a portfolio of apps like a multitool for different travel situations — is easier if, like me, you kind of dig reading manuals and testing their claims; or if you can find confident Blind people to show you the moves. The gestures we use to navigate our touchscreens are unfamiliar enough to fluster an unsuspecting sighted person (a single tap reads, a double tap activates, and we have distinct gestures for spelling and reading by line). Asking a user of standard gestures to help you with your phone is like relying on sighted travel tips for nonvisual travel. Blind and sighted people can share knowledge, but it’s easiest to learn nonvisual techniques from people who already know them well. Effective, confident travel with a long white cane or a guide dog — complemented by a tableau of sound, texture, memory and inference — is learned through countless hours of discovery and refinement, just as sighted people learn nuances of travel as they grow. Whether we’re born to Blindness or experience it as an interruption of comfortable habits, our confidence as Blind travelers grows when the people around us (Blind or sighted) believe in our potential and right to move freely; when we have means to learn effective nonvisual techniques for perception and navigation; and when we travel frequently enough that our success becomes unremarkable to ourselves.

As I consider the avoidable infrastructural frictions imposed on Blind people by sighted decision-makers — illegible signage and wayfinding, mumbled transit announcements, and inaccessible digital amenities, to name a few — I reflect that our collective ability to improve nonvisual access to the public spaces of the future is blunted because so many of us are absent from public spaces today. Confined by the conviction that the joy of spontaneous independent travel is beyond our inherent capacity, many of us don’t agitate for incremental changes to the built and digital environments that would reduce ambiguity and nuisance, and increase discovery and ease. The project of liberation through education in nonvisual travel techniques involves uprooting paternalistic systems and cultural assumptions that run deep. As we consider frictions imposed by spaces and technology built by people, we must hold the truth that they are entangled with our expectations for ourselves and one another.

Chancey hears from her friend Nihal about an encounter with one bank’s not-so-accessible digital interface.

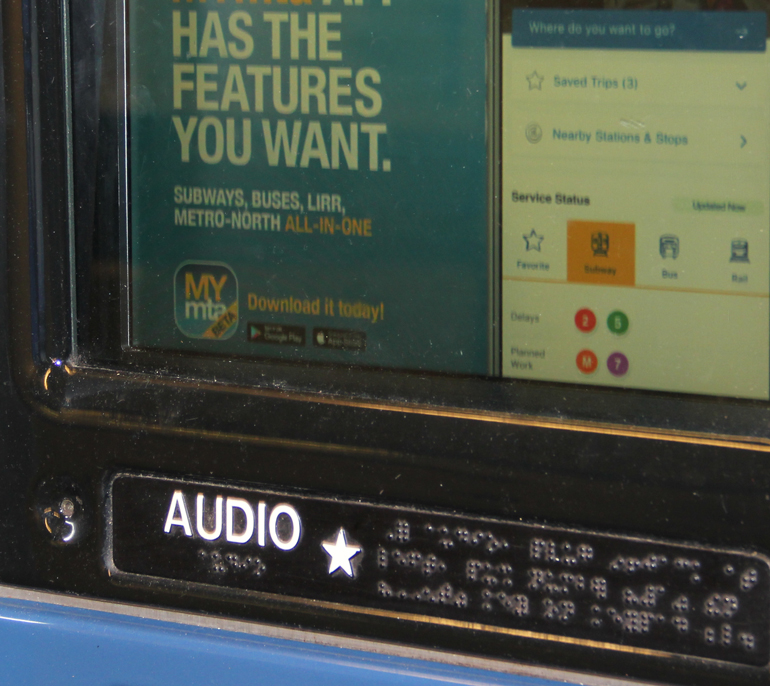

In a transit hub, commercial establishment, or professional conference, I spend too much time creatively confronting the absence of signs and maps. A typical traveler can disembark from a plane and follow signs through walkways, escalators and trams to find their bags and their way home. A few airports offer a parallel structure for wayfinding via Bluetooth beacons detectable with a smartphone app, but these are not the norm — and their level of maintenance, completeness, and accuracy reflects their slow and scattershot adoption. Although the interactive touchscreens a passenger uses to check in and retrieve a boarding pass or train ticket are increasingly accessible via headphones and a Blind-friendly gesture set, this accessibility is almost always missing from other public touchscreens. Airport hamburger stands, digital museum guides, and even the interactive wayfinding screens in New York City subway stations are all hostile to even basic nonvisual use.

This comes to pass despite ten years of mobile touchscreens, enthusiastically adopted by Blind people, capable of rendering intelligible the same interactive menus, keyboards, text, and spatially related points that sighted travelers find on public screens. Our access to information lags behind what can technically be achieved because many developers are unfamiliar with, and overestimate the difficulty of ensuring, digital accessibility. Legal requirements for creating accessible digital tools are often unclear and unenforced, while not all Blind people are confident enough in public spaces to feel the import of our rights.

So, as I disembark from a plane, I ask the gate agent for directions to baggage claim. Receiving these directions — take a left, go about a hundred yards and find the escalator on your right, then head down — is a rare treat. It’s much more common for the gate agent, understandably struggling to say something sensible without reference to pointing or signs, to entreat me to stand waiting at the gate while they summon a worker I can follow. Spaces devoid of nonvisual signage and guidance cause trouble not just for Blind people, but for anyone whose job it is to orient people passing through. As I decline the offer of a personal escort through the airport and instead ask the people I pass about nearby gate numbers and landmarks, I know that most of them think I’m asking because I’m Blind. Most of the time, though, I’m asking because the digital infrastructure of a public space only deigns to speak to sighted travelers.

If you’ve ever encountered a vending machine that contemptuously spits back all but the crispest of dollars or an office copier with a labyrinthine menu and a skittish touchscreen, you already know that the presence of a feature does not guarantee its reliability or ease. Nonvisual workflows built into public digital amenities often betray a certain lack of fluency in or dedication to “good” experiences that don’t revolve around looking at screens. Sighted New Yorkers partake of a mostly tolerable MetroCard kiosk experience, with a touchscreen that offers service in multiple languages and prominently displays the most frequently requested options to keep transactions relatively quick. Blind commuters must bring their own headphones to plug into an invariably dusty jack, press the code “1#” on the machine’s dial pad, and listen to a robotic voice first synthesized during or before the 1990s. This odd digital companion guides the user through a tree of dial-up menus, as slowly as the voice of any phone tree. The only way to navigate this interaction quickly is to memorize the series of five or six numbers that leads to the option you want. Verbose, finicky, and deprecated interactions also lurk within many taxicab seat-back tablets, ATMs, and assorted ticketing machines. I feel a low-key dread whenever I join a line to use an unfamiliar public kiosk because I know my transaction will take me longer, and I imagine the people behind me will blame Blindness rather than clunky design.

When designers of public digital amenities proceed as though any accessible solution is good enough for Blind people, they can build “solutions” that produce not only inefficiency but bizarre implications that might provoke controversy if applied to “normal” users. In a hotel in Anaheim, I found myself treated to what I’ll call the “Destination: Harassment Elevator.” Most riders pressed their desired floor in the elevator lobby, then watched for a sign to indicate which elevator they should board, allowing for more efficient trips. In contrast, Blind people pushed a special button and then keyed in their floor, which was announced in a loud, strident voice. When a Blind person’s elevator arrived, the same voice proclaimed their floor again, along with the location of the particular elevator car going there. Elevators carrying Blind riders were coded to take about twice as long to close their doors as elevators without riders who had pressed the special button. In the mid-day chaos of a conference, I felt bad for making an elevator slower, and a bit piqued that an engineer somewhere had encoded the assumption that Blind people are slow. (Slow is a feature for some Blind people… and folks with small children or unwieldy luggage. Couldn’t “slow” have its own button?) In the quieter evening hours, I found the booming announcement of my presence, my floor, and my specific elevator so disconcerting that I just keyed in my floor without invoking this “feature” and hoped for the best. Although I am glad that more public amenities than ever have some nonvisual means of use — we’ve come a long way — I look forward to the day when public digital interactions are crafted not just to comply with accessibility laws and standards, but to make me feel as capable, welcome, and unremarkable as other people passing through.

Chancey speaks with Cayte and Nihal about the trials and tribulations of accessibility technology in New York City’s public transit system.

In the past five years, a new form of environmental accessibility for Blind people has come to our smartphones: remote visual interpretation. Some interpreter apps describe pictures: TapTapSee uses computer vision to return a short description of an object in seconds, and BeSpecular crowdsources multiple answers from volunteers who offer subjective, rich descriptions that are great for shopping for and choosing outfits, sightseeing, and understanding nuance in photos. Other apps, like BeMyEyes and Aira, create a live connection between a Blind person and a volunteer or paid interpreter. Pairing a live camera view with a conversation is a powerful tool: I’ve used this method to find a trailhead in a park, shop for theme socks, get groceries, move swiftly through airports, monitor my 3D printer, wrangle my office copier, and learn from completely silent origami videos. Visual interpreter technology has granted me the latitude to do some things more quickly or spontaneously than I otherwise might. I’ve never loved asking for assistance in stores when I’m just looking, and working with an interpreter helps me peek around without feeling somehow committed to making a purchase. I’m way more willing to wander through an art museum with family and friends now that I can access descriptions and decide what to focus on, rather than tether my focus to someone else’s whims. Disengaging access to information from the motivations, priorities, and favors of the people around me is one hell of a drug.

But this technology requires plenty of battery power, mobile data, and mobile broadband signal strength. It also calls for plenty of emotional labor on both sides of the equation: Blind people have to blend giving direction with a certain amount of patience, and interpreters have to gaze wherever the camera goes and spin their visual perceptions into clear, concise verbal description in real-time. Frustration can arise over latency, choppy audio and video streams, differing points of reference, and challenging moments in communication.

Most visual interpreter apps — whether they use pictures or live video, whether powered by computer vision or human beings — have Terms of Service and Privacy Policies every bit as baroque, obtuse, and alarming as those of cloud-connected services designed for the general population. Aira, BeMyEyes, TapTapSee, and BeSpecular retain the content of our sessions for a long time (ranging from 18 months to indefinite). Most of these apps can leverage that data, which may contain audio, video, and metadata like location, for a range of corporate purposes. Each one of these services is constructed to give us asymmetrical access to our own data: None allows us to directly replay, copy, export, or delete our sessions.

Although I can, with effort, use third-party apps to record and save a session, these apps aren’t designed to make it easy, and I almost never do it. So, memories mediated and inflected by interpretation get lost. Once, while I was traveling in the sleeper car of a train and trying to figure out which of two stations was closest to our hotel, an interpreter happened to catch my husband’s curly head and GoPro popping up to get my picture. “Let’s take his picture right back,” I said. In the single best line of description I’ve ever received, my deadpan interpreter replied: “I’m sorry. We can’t. He ran away like a cat from a sprinkler.” I’d give a lot to revisit that moment as it unfolded.

Chancey meets Dawn, who shares a story about an awkward encounter with her own digitally-reproduced image.

Although many people in the Blind community welcome the advent of visual interpreter apps, we know that, like any technology, their consequences will ripple out in unpredictable ways. Will our data ever be acquired, exposed in a hack, or used against us in court? Will Blind people lean on interpretation at moments where learning nonvisual techniques for doing a task would be in our better interest? Will websites, museums, or shops ever construe the availability of remote interpreters as a panacea that erases their obligation to make themselves more natively accessible? Journalists covering the complications of ride-sharing, social networks, and the rest of the tech industry nonetheless tend to cover visual interpretation breathlessly and uncritically. There is conflict between my love for the liberatory moments enabled by these apps and my desire for them all to be more transparent, symmetrical, and fair in their engagement with the data of our daily lives.

I opened with a silly story about using a bunch of apps to steer a boat around a lake to rattle you out of some reflexive assumptions that, after meeting thousands of you, I imagine you might have: that it is amazing when Blind people use technology, that it is harder for us because not seeing is hard, that accessibility is liberation, and that all accessible designs are good. Whether you work in development or design, have a Blind friend or colleague, or just travel through public spaces with us as a potential ally, I invite you to check whether you’re carrying any of these assumptions. If you are, please test them against what you see in our culture, our digital amenities, and emerging platforms. Be skeptical about press releases announcing the next innovation designed “for” us. If something you know we need is broken and also affecting you (mumbled subway announcements, for instance?) raise your voice for its maintenance and care. Engage us in direct conversation about our priorities and — when you’re in a position to do so — hire us, or make the introductions that can bring more of us into the development and design pipeline. Informed, critically engaged allies can help us build a future of richer, more effective multi-sensory digital engagements for everyone.

The views expressed here are those of the authors only and do not reflect the position of The Architectural League of New York.
